About the Competition

The mission of the Cornell Lab of Ornithology’s Center for Conservation Bioacoustics (CCB) is to collect and interpret sounds in nature. The CCB develops innovative conservation technologies to inspire and inform the conservation of wildlife and habitats globally. By partnering with the data science community, the CCB hopes to further its mission and improve the accuracy of soundscape analyses.

In this competition, you will identify a wide variety of bird vocalizations in soundscape recordings. Because the recordings are complex, their labels are weak: each recording is labeled with a particular bird species in the foreground, but the background may contain anthropogenic sounds (e.g., airplane overflights) or other bird and non-bird (e.g., chipmunk) calls. Bring your new ideas to build effective detectors and classifiers for analyzing complex soundscape recordings!

If successful, your work will help researchers better understand changes in habitat quality, levels of pollution, and the effectiveness of restoration efforts. Reliable machine listeners would also allow conservationists to deploy more recording units worldwide and would enable data-driven conservation at a scale not yet possible. The eventual conservation outcomes could greatly improve the quality of life for many living organisms—birds and human beings included.

Competition Timeline

- September 8, 2020 - Entry deadline. You must accept the competition rules before this date in order to compete.

- September 8, 2020 - Team Merger deadline. This is the last day participants may join or merge teams.

- September 15, 2020 - Final submission deadline.

Code Requirements and Evaluation

This is a Code Competition. Submissions to this competition must be made through Notebooks. In order for the "Submit to Competition" button to be active after a commit, the following conditions must be met:

- CPU Notebook <= 9 hours run-time

- GPU Notebook <= 2 hours run-time

- TPUs will not be available for making submissions to this competition. You are still welcome to use them for training models.

- No internet access enabled

- External data, freely & publicly available, is allowed. This includes pre-trained models.

- No custom packages enabled in kernels

- Submission file must be named "submission.csv"

Please see the Code Competition FAQ for more information on how to submit.

Submissions will be evaluated based on their row-wise micro-averaged F1 score.

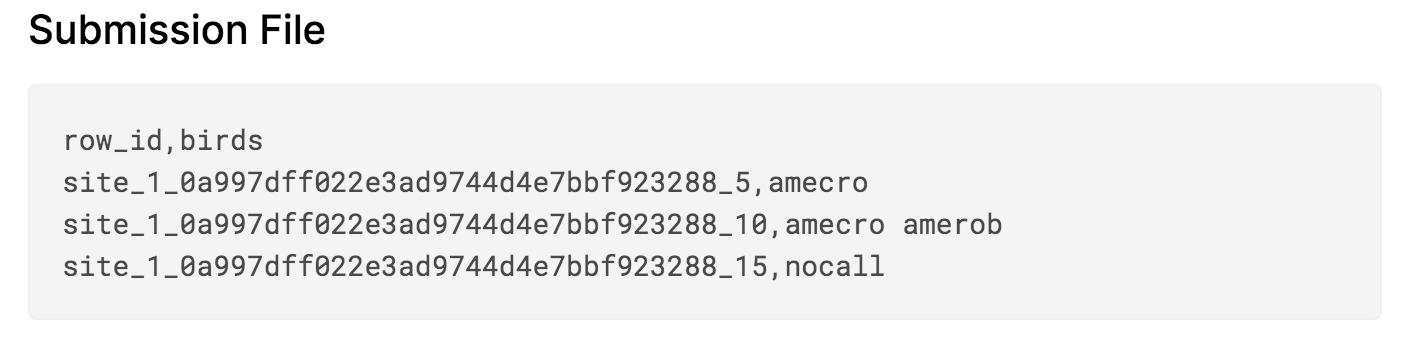

For each row_id/time window, you need to provide a space-separated list of the unique birds that made a call beginning or ending in that time window. If there are no bird calls in a time window, use the code "nocall".
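As a rough illustration of the metric, the sketch below computes an F1 score for each row from its space-separated label strings and then averages the per-row scores. This is only one plausible reading of "row-wise"; the official scoring code is not given here, and the function names and the species codes in the usage example are illustrative assumptions.

```python
def row_f1(true_labels: str, pred_labels: str) -> float:
    """F1 for one row; labels are space-separated codes (assumed format)."""
    true_set = set(true_labels.split())
    pred_set = set(pred_labels.split())
    true_positives = len(true_set & pred_set)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(pred_set)
    recall = true_positives / len(true_set)
    return 2 * precision * recall / (precision + recall)


def mean_row_f1(y_true: list, y_pred: list) -> float:
    """Average of per-row F1 scores (an assumed reading of the metric)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    scores = [row_f1(t, p) for t, p in zip(y_true, y_pred)]
    return sum(scores) / len(scores)


# Hypothetical species codes, used only for illustration.
y_true = ["amecro", "amecro amerob", "nocall"]
y_pred = ["amecro", "amecro", "amecro"]
print(round(mean_row_f1(y_true, y_pred), 4))  # 0.5556
```

In this sketch "nocall" is treated like any other label, so a row predicted as "nocall" scores 1.0 only when the true label is also "nocall".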

There are three sites in the test set. Sites 1 and 2 are labeled in 5-second increments, while site 3 was labeled per audio file due to the time-consuming nature of the labeling process.
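To make the two labeling granularities concrete, here is a minimal sketch that lists the windows a prediction pipeline might need to cover for one audio file. The site identifier "site_3", the function name, and the choice to round a partial final window up to a full 5-second window are all assumptions for illustration, not details confirmed by the competition.

```python
import math

WINDOW_SECONDS = 5  # sites 1 and 2 are labeled in 5-second increments


def window_end_times(duration_seconds: float, site: str) -> list:
    """Return the end time in seconds of each labeled window.

    For the per-file site (assumed here to be named "site_3"), a single
    entry of None stands for "the whole file".
    """
    if site == "site_3":
        return [None]
    # Assumption: a trailing partial window still counts as one window.
    n_windows = math.ceil(duration_seconds / WINDOW_SECONDS)
    return [WINDOW_SECONDS * (i + 1) for i in range(n_windows)]


print(window_end_times(12.0, "site_1"))  # [5, 10, 15]
print(window_end_times(60.0, "site_3"))  # [None]
```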

The submission file must have a header and should look like the following:
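The sample file itself is not reproduced in this section. As a hedged sketch of how such a file might be produced, the code below writes a header row followed by one row per row_id. The column name "birds", the example row_id values, and the species codes are assumptions; only the file name "submission.csv", the row_id concept, the space-separated format, and the "nocall" code come from the text above.

```python
import csv


def format_rows(predictions: dict) -> list:
    """Turn {row_id: iterable of species codes} into CSV rows."""
    rows = [["row_id", "birds"]]  # "birds" is an assumed column name
    for row_id, birds in predictions.items():
        unique_birds = sorted(set(birds))
        # An empty prediction is written as the "nocall" code.
        label = " ".join(unique_birds) if unique_birds else "nocall"
        rows.append([row_id, label])
    return rows


def write_submission(predictions: dict, path: str = "submission.csv") -> None:
    """Write the formatted rows to the required submission file."""
    with open(path, "w", newline="") as handle:
        csv.writer(handle).writerows(format_rows(predictions))


# Hypothetical row_ids and species codes, for illustration only.
example = {
    "clip_a_5": ["amecro"],
    "clip_a_10": ["amerob", "amecro", "amecro"],
    "clip_a_15": [],
}
print(format_rows(example))
```

Calling `write_submission(example)` would then create the file in the notebook's working directory. Deduplicating with `set` reflects the requirement that each row list the unique birds for its time window.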

Prizes

- 1st Place - $12,000

- 2nd Place - $8,000

- 3rd Place - $5,000